Here you are: your algorithm is running and producing its first result. Congratulations! However, your job as a data scientist is not over yet, and one of its most interesting parts is about to start: analysis!

In Computer Vision, we have the advantage of being able to visualize the results of our algorithms, which makes debugging and iterating easier than in many other fields.

In this post, I’ll share some tips that helped me analyze computer vision experiments more efficiently. Hopefully they’ll help you too! This first post focuses on image processing algorithms; stay tuned for tips specific to deep learning projects!

Tip 1: Log the different steps of your pipeline

As developers, we are used to logging warnings and errors in our code. When working with algorithms, a good practice is to also log intermediate results, which can then help you analyze your pipeline. When working with images, another useful practice is to build in, by design, the ability to visualize the image after each step of your pipeline.

The “by design” part is the trickiest one. One option that worked well in my project was to implement it with custom Python code: visualization can be a method of a class, called every time an image is modified.
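To make this concrete, here is a minimal sketch of what such a class could look like. It is not the code from my project: the names `TrackedImage`, `apply`, `threshold` and `invert` are made up for illustration, and the image is a plain list of grayscale rows so that the example runs with the standard library only. In a real pipeline you would more likely hold a NumPy array and save PNG files.

```python
import logging
from pathlib import Path

logger = logging.getLogger("pipeline")


class TrackedImage:
    """Grayscale image (rows of 0-255 ints) that records every modification."""

    def __init__(self, pixels, debug_dir=None):
        self.pixels = [list(row) for row in pixels]
        self.debug_dir = Path(debug_dir) if debug_dir else None
        self.history = []  # names of the steps applied so far
        self._snapshot("input")

    def apply(self, step_name, transform):
        """Run one pipeline step, then log and save its result."""
        self.pixels = transform(self.pixels)
        self._snapshot(step_name)
        return self  # allows chaining: image.apply(...).apply(...)

    def _snapshot(self, step_name):
        index = len(self.history)
        self.history.append(step_name)
        logger.info("step %d (%s) done", index, step_name)
        if self.debug_dir is None:
            return  # visualization disabled, keep only the log line
        self.debug_dir.mkdir(parents=True, exist_ok=True)
        path = self.debug_dir / f"{index:02d}_{step_name}.pgm"
        height, width = len(self.pixels), len(self.pixels[0])
        rows = "\n".join(" ".join(str(v) for v in row) for row in self.pixels)
        # Plain-text PGM file: a simple image format that needs no extra library.
        path.write_text(f"P2\n{width} {height}\n255\n{rows}\n")


def threshold(pixels, level=128):
    """Example step: binarize the image."""
    return [[255 if v >= level else 0 for v in row] for row in pixels]


def invert(pixels):
    """Example step: invert the gray levels."""
    return [[255 - v for v in row] for row in pixels]
```

With this sketch, `TrackedImage(pixels, debug_dir="debug_steps").apply("threshold", threshold).apply("invert", invert)` writes `00_input.pgm`, `01_threshold.pgm` and `02_invert.pgm`, so each step of the pipeline leaves an image you can open afterwards.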

Practical example for image segmentation

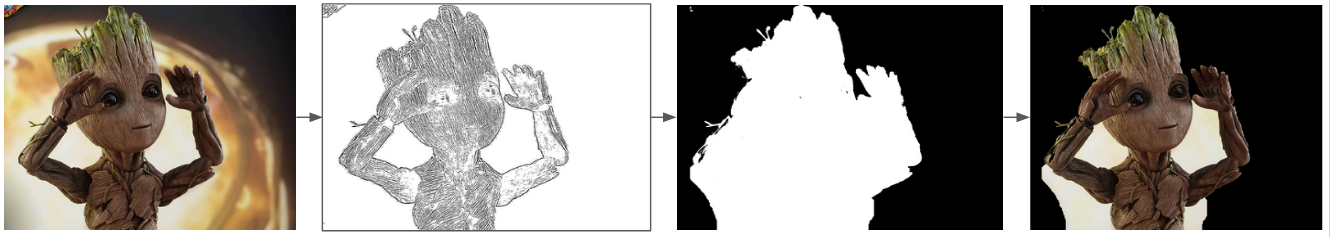

Let’s take an example: imagine we are working on a pipeline to segment objects from images as below:

When analyzing the resulting images, you will want to know what happened at each step:

Here, the segmentation did not go as planned but if you only have the two previous images, it will be hard to understand why. However, if you log all intermediate steps, it will be easier to analyze:

With intermediate results, it appears that the step which caused the segmentation error and should be investigated primarily is the third one.

Side note: a suitable image viewer is also a must to be efficient. If you’re working on Linux, I find Nomacs to be a good option!

If you want to reproduce these experiments, the code is available on GitHub!

The segmentation algorithm used here is GrabCut, originally an interactive foreground extraction algorithm, which is implemented in OpenCV. You can check out this article on automatic image segmentation with OpenCV for more details!

Tip 2: Use cache to speed up iterations

An image processing pipeline often chains several steps, some of which take longer to run than others. When analyzing a result, you will probably want to test hypotheses quickly. Two things help:

1. A clear view of how the steps chain together and how long each one takes

If we go back to the previous example, and add a step to extract the contours of the segmented object, we would get a flow like the one below:

In this example, the segmentation step is the longest one, with a run time of around 10s.

You can get such a graph after a few runs of your pipeline. If you need something more precise, you can add timed logs in your pipeline or even use a profiling tool.
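As an illustration of timed logs, here is a small sketch based on a context manager. The names `timed`, `durations` and `slowest_step` are my own for this example, not part of any library. If you need more detail than this, Python also ships with the `cProfile` profiler.

```python
import logging
import time
from contextlib import contextmanager

logger = logging.getLogger("pipeline.timing")
durations = {}  # step name -> list of measured run times, in seconds


@contextmanager
def timed(step_name):
    """Measure the wall-clock duration of the enclosed block and log it."""
    start = time.perf_counter()
    try:
        yield
    finally:
        # Recorded even if the step raises, so failed runs are timed too.
        elapsed = time.perf_counter() - start
        durations.setdefault(step_name, []).append(elapsed)
        logger.info("%s took %.3f s", step_name, elapsed)


def slowest_step():
    """Return the name of the step with the largest average duration so far."""
    return max(durations, key=lambda name: sum(durations[name]) / len(durations[name]))
```

Wrapping each step in `with timed("segmentation"): ...` (where the step name is whatever you choose) fills `durations` over a few runs, which is enough to see which step dominates the total run time.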

2. A cache tool to avoid re-running steps that were not modified

In a previous project, we used Cachier as a tool to cache single steps in our pipeline. You can use it by adding a decorator above the functions that you want to cache:
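With Cachier, the decorator is `@cachier()`, imported with `from cachier import cachier`. To show the idea without any dependency, here is a minimal stand-in written with the standard library only. It is a sketch of the principle, not Cachier's implementation: `disk_cache`, the `.pipeline_cache` directory and the `segment` function are hypothetical names, and the arguments of the cached function are assumed to be picklable.

```python
import functools
import hashlib
import pickle
from pathlib import Path


def disk_cache(cache_dir=Path(".pipeline_cache")):
    """Build a decorator that stores return values on disk, keyed by arguments."""

    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            # The pickled function name and arguments identify the call.
            key_bytes = pickle.dumps((func.__qualname__, args, sorted(kwargs.items())))
            path = Path(cache_dir) / f"{hashlib.sha256(key_bytes).hexdigest()}.pkl"
            if path.exists():
                return pickle.loads(path.read_bytes())  # cache hit: skip the step
            result = func(*args, **kwargs)
            path.parent.mkdir(parents=True, exist_ok=True)
            path.write_bytes(pickle.dumps(result))
            return result

        return wrapper

    return decorator


@disk_cache()
def segment(pixels, iterations=5):
    """Stand-in for a slow step such as the segmentation above."""
    return [[1 if v > 127 else 0 for v in row] for row in pixels]
```

Note that this sketch only looks at the arguments: if you change the body of a cached function, you need to delete the cache directory yourself to force a re-run.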

This decorator, combined with the knowledge of the time-consuming steps of the pipeline, helped me test hypotheses quickly during analysis!

An alternative, especially relevant for Deep Learning projects, is DVC and DVC pipelines.

Tip 3: Set up a versioning flow for your experiments

An experiment outcome depends on different elements: the code and parameters used to launch it, but also the data it was run on. Aside from reproducibility, being able to version these elements will save you a lot of time if you ever want to go back to a previous iteration.

For code and parameters, Git is usually the agreed-upon way to manage versioning.

For data, and in Computer Vision images more precisely, the first step is to store images in a shared storage, be it on premises or with a cloud provider. This will allow you to share experiments with your team.

Next, to track changes in the data, a great tool is again DVC, which stands for Data Version Control, the equivalent of Git for data versioning. To learn more about DVC, you can watch this intro video on YouTube.
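To illustrate what "versioning an experiment" ties together, here is a hand-rolled sketch that records the three elements (code, parameters, data) in a small JSON manifest. This is not how DVC works internally and it is no replacement for it. The function names and the manifest layout are assumptions made for this example, and hashing every file this way is only practical for small datasets.

```python
import hashlib
import json
import subprocess
from pathlib import Path


def current_commit():
    """Return the current Git commit hash, or None outside a Git repository."""
    try:
        out = subprocess.run(
            ["git", "rev-parse", "HEAD"],
            capture_output=True, text=True, check=True,
        )
    except (OSError, subprocess.CalledProcessError):
        return None  # Git missing, or not inside a repository
    return out.stdout.strip()


def data_fingerprint(data_dir):
    """Hash the relative paths and contents of all files under data_dir."""
    digest = hashlib.sha256()
    root = Path(data_dir)
    for path in sorted(p for p in root.rglob("*") if p.is_file()):
        digest.update(path.relative_to(root).as_posix().encode())
        digest.update(path.read_bytes())
    return digest.hexdigest()


def write_manifest(output_path, params, data_dir):
    """Save what identifies an experiment run: code, parameters and data."""
    manifest = {
        "commit": current_commit(),
        "params": params,
        "data_sha256": data_fingerprint(data_dir),
    }
    Path(output_path).write_text(json.dumps(manifest, indent=2, sort_keys=True))
    return manifest
```

Storing such a manifest next to each set of results makes it possible to tell, later on, which commit, parameters and images produced them.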

Tip 4: Store and Format your analysis and results

You might want to go back to previous analyses and quickly browse through previous results. Having an agreed-upon interface within your team to structure and store analyses will save you time.

For instance, Notion templates and tables are an efficient and user-friendly way to standardize, store and share analysis results. Many other tools exist out there and could serve the same purpose!

Conclusion

I hope that these tips were useful! They do not cover the whole scope of Computer Vision experiment analysis but only the tooling parts on which I got to improve.

I would love to hear your thoughts about them. I am sure there is still a lot to improve, so don't hesitate to contact us!